DocOps: Content analytics and analysis

In the second part of our series, you will discover analytics and why it’s needed for Docs As Code.

This post focuses on the functional basis of Quality Assurance.

The QA of (API) documentation is not only about structure, typos, and grammar, but also about accessibility, SEO and performance.

Documentation is part of your product!

“If you don’t know what you need to communicate, how will you know if you succeed?”

(Margot Bloomstein)

A key part of the Docs As Code approach is to apply a Quality Assurance (QA) model.

Content analytics and analysis provide valuable insights that can help you enhance the quality of your documentation.

You can use the gathered information to define measurement points and a test matrix for (automated) QA checks.

“If we don’t know what our content needs to do, how will we know if it’s successful?”

(Margot Bloomstein)

The definition of the quality of documentation depends on who you ask.

It is important to define what constitutes ‘quality’.

Why?

Different teams might have different opinions about what constitutes “good documentation”.

What is “good” according to your developer team might differ from what your support team needs, and therefore from how that team defines it.

Cooperate with all involved teams (Marketing, Sales, Support, Developers) to create a QA blueprint for your product.

Depending on your needs you will use one or more editorial style guides.

One guide may be enough for the whole documentation, or the developer documentation may have different editorial needs than the end-user documentation.

The main goal of QA is to create valuable content for your intended audience.

Suppose that you write documentation for end users. In this case, you want to make sure that your docs are understandable for this audience, which means that you may use different wording and terminology than you would for a more developer-focused audience.

Curate content to drive the user experience. Make it:

Gather information to get a better overview.

Study how consumers use and experience your documentation: how they search, which parts of your docs have the most visitors, and which documentation-related questions appear in issue trackers or reach your support team.

Before you can start testing, you’ll need to decide what you want or need to improve first.

Generate a list of specific tasks and questions. This will give users clear guidance on what actions to take and what features to speak about.

Designing a blueprint for testing is a demanding task that will take time and effort.

User stories are an outstanding way to get started.

At a basic level, the plan should outline:

Imagine that you’re testing the installation documentation of your application.

Here’s an example of what a specific task and question might look like:

For example:

“If we don’t know if our documentation’s successful, how will we know what we need to do to improve it?”

(Margot Bloomstein)

Let real users test your docs, see how they use them, how they understand them.

User testing is a method for gathering information about the quality of your documentation.

It can show you where you need to improve and give you ideas about where to go forward.

User testing is expensive and time-consuming, but in the long run, it will help you to create a successful user experience.

If you do not have the resources to do fully-fledged user testing, then guerrilla testing can be an option for you.

Ad-hoc testing with passers-by during a conference sprint, or including informal tests in the onboarding process, is better than no testing at all.

Start user testing as early as you can!

It will make sure that your docs are on a good track from the beginning.

Changing the architecture and rewriting content later on will require more effort.

The use of analytics software always comes with a tradeoff!

If you use it, you should respect the privacy of your users.

For example, your analytics setup should comply with the GDPR (EU General Data Protection Regulation), respect the user's browser settings (Do Not Track), and handle the collected data in a respectful way.
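As a minimal sketch of what respecting the browser setting could look like on the server side, the snippet below checks the `DNT` request header, which a browser sends as `DNT: 1` when the user has enabled Do Not Track. The function name `tracking_allowed` and the plain dictionary of headers are assumptions made for illustration; they are not part of any particular analytics product.

```python
from typing import Mapping


def tracking_allowed(headers: Mapping[str, str]) -> bool:
    """Return False when the request carries the Do Not Track signal (DNT: 1)."""
    # HTTP header names are case-insensitive, so normalise them before the lookup.
    normalised = {name.lower(): value.strip() for name, value in headers.items()}
    return normalised.get("dnt") != "1"


# Hypothetical request headers, for illustration only.
print(tracking_allowed({"DNT": "1"}))
print(tracking_allowed({"User-Agent": "ExampleBrowser"}))
```

A check like this only covers the Do Not Track part; GDPR compliance also depends on what you store, for how long, and on what legal basis.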

Users may use search terms or search your documentation in a way you never thought about.

People often think of analytics software as a tool for marketing or eCommerce websites.

Nevertheless, these metrics are useful for understanding and improving your software documentation.

You can learn how visitors interact with your site, how they search, what kind of keywords they use, how much time they spend on pages, and which pages have the most visitors.

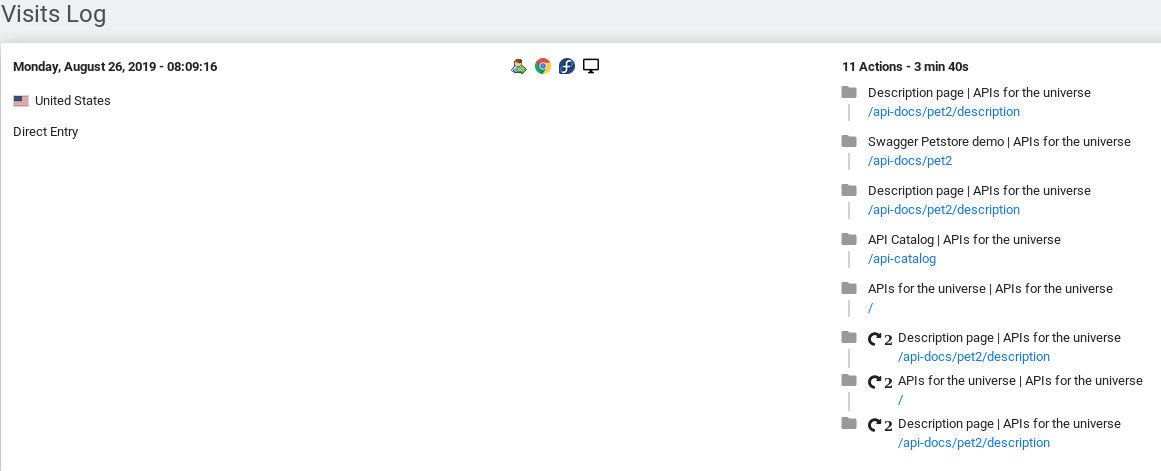

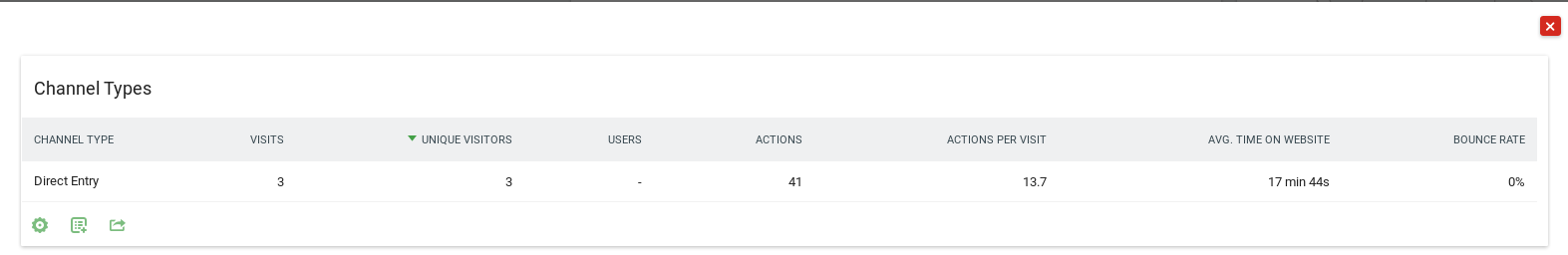

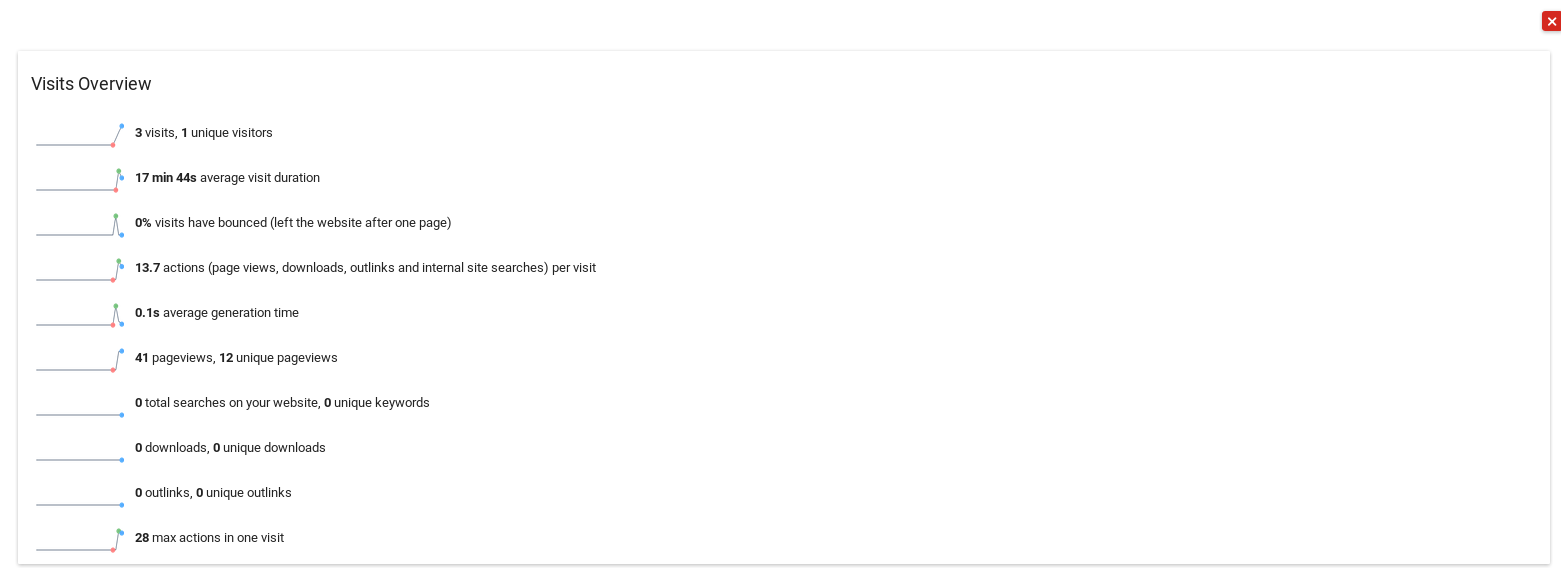

Matomo, formerly known as Piwik, is a downloadable, free (GPL-licensed) web analytics platform.

It provides detailed reports on your website and its visitors, including search engines and keywords they used, the language they speak, which pages they like, the files they download and so much more.

Analytics software is not only a tool for marketing or eCommerce websites, but its metrics can also be useful for understanding and improving your software documentation.

Visitor statistics

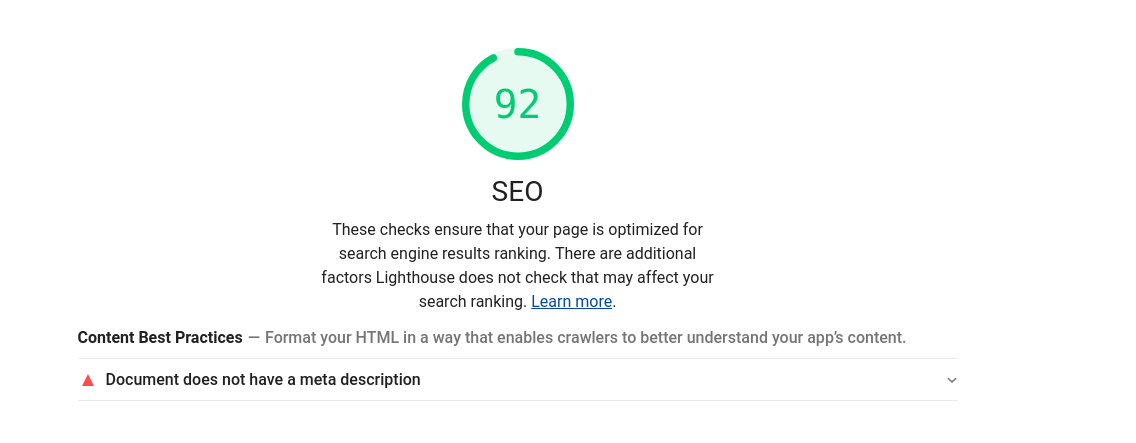

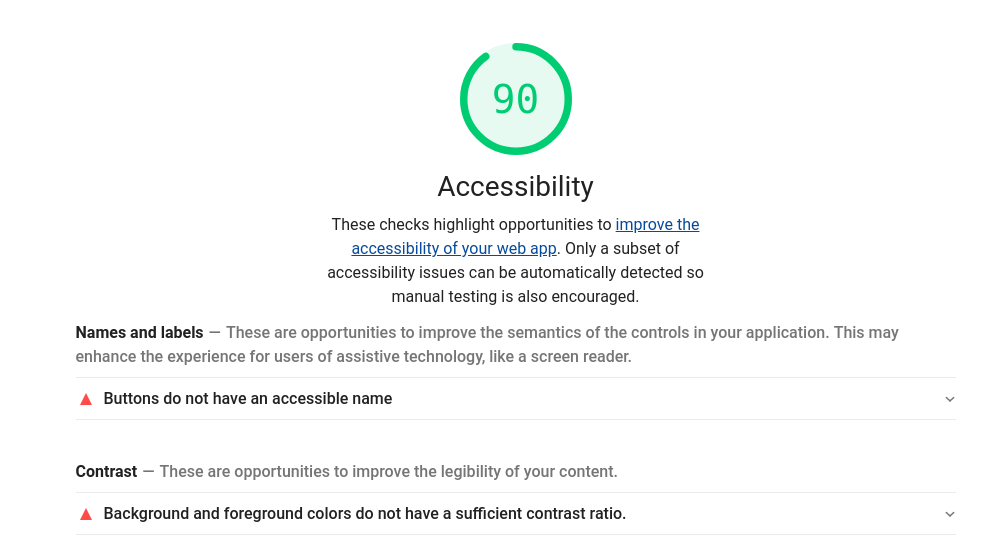

Lighthouse is an open-source, automated tool for improving the quality of web pages.

You can run it against any web page, public or requiring authentication.

It has audits for performance, accessibility, progressive web apps, and more.

You can run Lighthouse locally, as part of your git workflow, or as part of your Docs As Code pipeline to ensure that your docs are following best practices for accessibility and performance.

By doing so, your docs will get better SEO rankings and, more importantly, will be accessible and useful for everyone.
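One way to turn a Lighthouse run into a pass/fail check is to have the Lighthouse CLI write a JSON report (for example with `--output=json --output-path=report.json`) and compare the category scores against minimum values. The sketch below assumes the report has a top-level `categories` object whose entries carry a `score` between 0 and 1. The threshold values and the function name `failing_categories` are hypothetical and should come from your own QA blueprint.

```python
from typing import Mapping

# Hypothetical minimum scores (0-100) per Lighthouse category.
THRESHOLDS = {"seo": 90, "accessibility": 90, "performance": 80}


def failing_categories(report: Mapping, thresholds: Mapping[str, int] = THRESHOLDS) -> dict:
    """Return {category: score} for every category that scores below its threshold."""
    failures = {}
    categories = report.get("categories", {})
    for name, minimum in thresholds.items():
        raw = categories.get(name, {}).get("score")
        # Scores are stored as 0..1; a missing or null score is treated as 0.
        score = round((raw or 0) * 100)
        if score < minimum:
            failures[name] = score
    return failures


# Hand-written sample shaped like a Lighthouse report, for illustration only.
sample_report = {
    "categories": {
        "seo": {"score": 0.92},
        "accessibility": {"score": 0.9},
        "performance": {"score": 0.75},
    }
}
print(failing_categories(sample_report))  # prints {'performance': 75}
```

In a pipeline, you could load the real report with `json.load` and exit with a non-zero status whenever the returned dictionary is not empty.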

SEO

The picture above is an example report using Lighthouse for checking SEO.

As you can see, the site scores 92 out of 100.

This is not bad, but fixing the reported issues can improve both the score and the ranking in search engines.

Accessibility

The picture above shows an example of a documentation site that scores 90 out of 100, which is a good start. Fixing the reported issues (names and labels, contrast) will raise the score.

This will improve the user experience and the search engine ranking, which helps to make content findable.

In one of the next parts of this series, you will see how you can add this check to your automated Docs As Code workflow and run it on each merge.

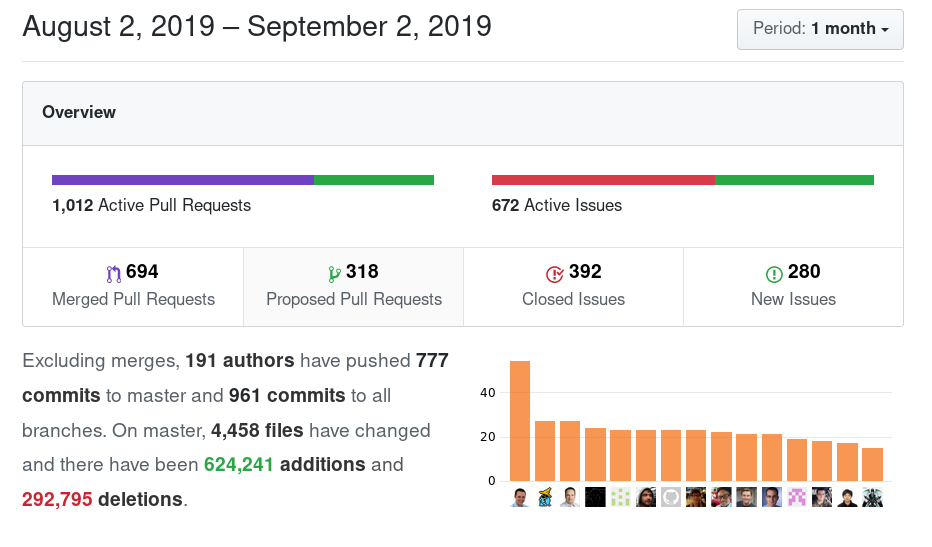

If you use a VCS (Version Control System), it is helpful to gather statistics about your repository.

VCS repositories contain information about commits, contributors and files.

For instance, you can configure your tests so that they pass or fail depending on how many commits a developer has contributed.

This information can help you to create a plan for QA testing.
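As a rough sketch of such a check, the snippet below counts commits per author from the output of `git log --format=%an` (one author name per line) and flags authors below a minimum commit count. The function names, the `min_commits` value, and the sample author names are all hypothetical; what a low count should trigger (for example, an extra review) is up to your QA plan.

```python
import subprocess
from collections import Counter


def read_git_log(repo_path: str = ".") -> str:
    """Run git in repo_path and return one author name per commit."""
    result = subprocess.run(
        ["git", "-C", repo_path, "log", "--format=%an"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


def commits_per_author(log_output: str) -> Counter:
    """Count commits per author, ignoring blank lines."""
    return Counter(line.strip() for line in log_output.splitlines() if line.strip())


def needs_extra_review(author: str, counts: Counter, min_commits: int = 5) -> bool:
    """Hypothetical rule: authors with fewer than min_commits commits get an extra review."""
    return counts[author] < min_commits


# Sample log output, for illustration only; use read_git_log() on a real repository.
sample_log = "Alice\nBob\nAlice\nAlice\n"
counts = commits_per_author(sample_log)
print(counts.most_common())
print(needs_extra_review("Bob", counts, min_commits=2))
```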

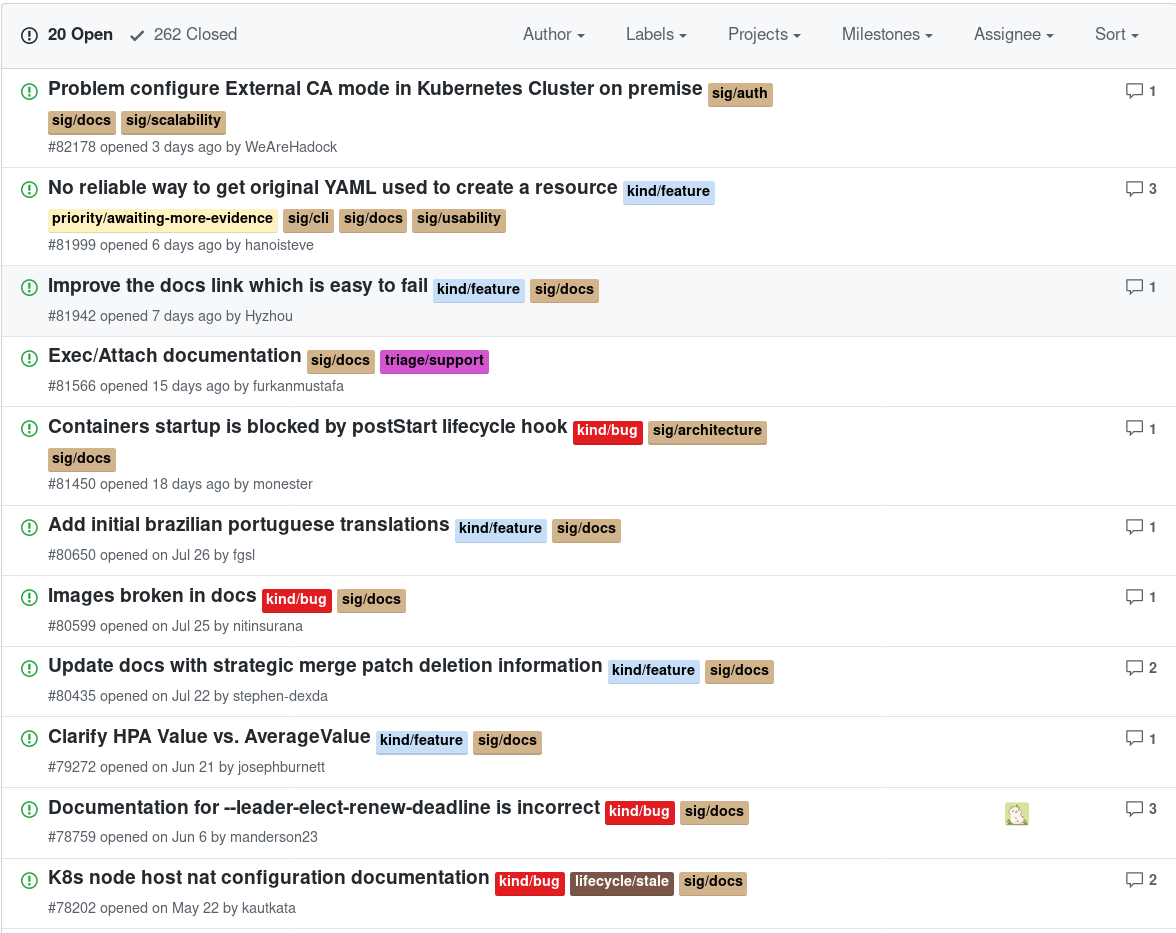

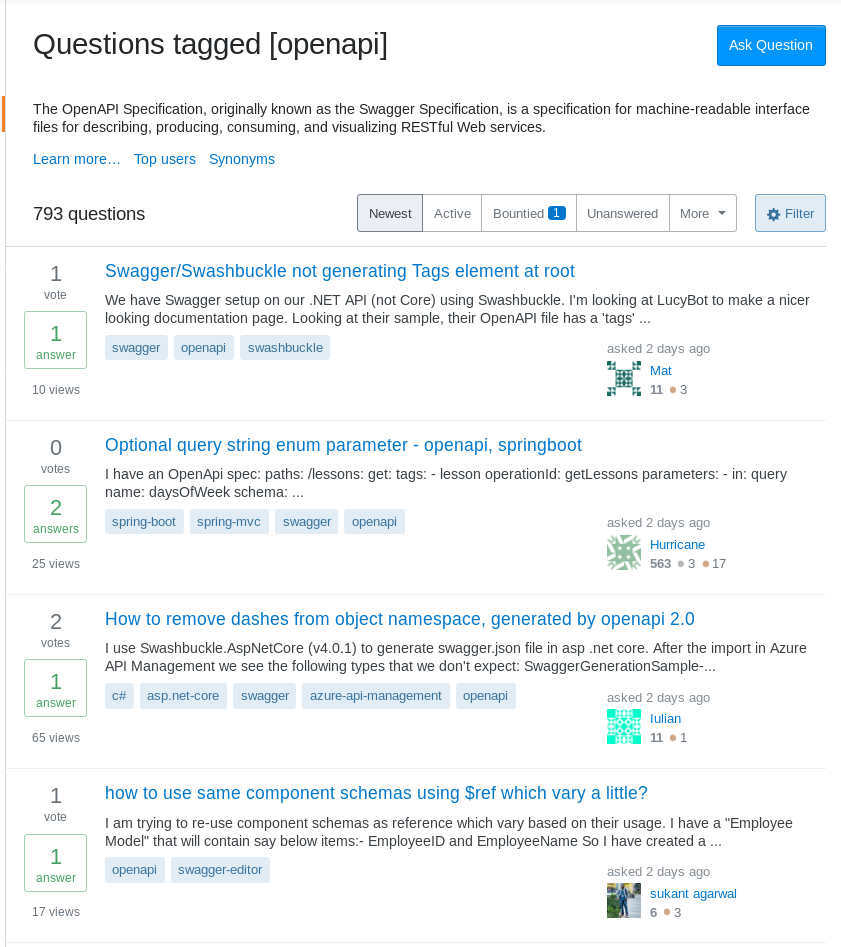

Support tickets and Stack Overflow are great sources to pinpoint issues.

Look at the questions that you get asked the most. Are they recurring items related to documentation, or to a lack of clarity about usage or installation, for example?

Could this be solved by adjusting the docs?

By comparing these results with your documentation you can get an overview of the quality state of your docs.
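The comparison can start very simply. The sketch below counts how many tickets mention each topic keyword and then lists the frequent topics that no documentation page title mentions. The ticket titles, topic keywords, page titles, and the `min_tickets` cut-off are invented for illustration, and substring matching is a deliberately naive stand-in for whatever tagging your issue tracker provides.

```python
from collections import Counter
from typing import Iterable, List


def topic_counts(tickets: Iterable[str], topics: Iterable[str]) -> Counter:
    """Count tickets that mention each topic keyword (case-insensitive substring match)."""
    topic_list = list(topics)
    counts = Counter()
    for ticket in tickets:
        text = ticket.lower()
        for topic in topic_list:
            if topic.lower() in text:
                counts[topic] += 1
    return counts


def undocumented_topics(counts: Counter, doc_titles: Iterable[str], min_tickets: int = 2) -> List[str]:
    """Topics raised at least min_tickets times that no documentation title mentions."""
    titles = [title.lower() for title in doc_titles]
    return [
        topic
        for topic, n in counts.most_common()
        if n >= min_tickets and not any(topic.lower() in title for title in titles)
    ]


# Invented sample data, for illustration only.
tickets = [
    "Installation fails on Windows",
    "How do I rotate an API key?",
    "API key rejected after upgrade",
    "Installation hangs at step 3",
]
counts = topic_counts(tickets, ["installation", "api key", "webhook"])
print(undocumented_topics(counts, ["Installation guide", "Webhook reference"]))  # prints ['api key']
```

A topic that shows up here is only a candidate: a page may exist under a different title, in which case findability rather than missing content is the issue.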

In this article, we shared some insights about gathering information about your (API) documentation and how to use it to start developing and using a check matrix to improve your docs.

In the next part of the series, you will explore how to set up and configure an editor and how to work with it the “DocOps way”.

Sven is a DocOps engineer and part of the content team at Pronovix.

He collaborates with customers to define and integrate style guides and quality assurance checks for documentation.

Sven has a background as a Site Reliability Engineer (SRE) and is part of various open source communities.
